Deadlocks and graph theory

When accessing a database through a series of transactions, a deadlock can occur at any time. Deadlocks are natural companions of transactions. We can decrease their likelihood by always accessing records and tables in the same order, but beyond a certain level of complexity we can never be completely free of them. This is a fair price for the ACID properties. In practice deadlocks occur rarely, and when one does, the only thing to do is to repeat the transaction in the process that was interrupted by the database's deadlock detection daemon. This is a very small performance drawback compared, for example, to the overhead caused by logging.
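The "repeat the transaction" advice can be sketched as a small retry loop. This is only an illustration: `DeadlockDetected` is a hypothetical exception standing in for whatever error your database driver raises for a deadlock victim, and `run_with_retry`, `max_attempts` and `base_delay` are names invented for this sketch.

```python
import random
import time


class DeadlockDetected(Exception):
    """Hypothetical stand-in for the driver-specific deadlock error."""


def run_with_retry(transaction, max_attempts=5, base_delay=0.05):
    """Call transaction() and repeat it if it was aborted because of a deadlock."""
    for attempt in range(1, max_attempts + 1):
        try:
            return transaction()
        except DeadlockDetected:
            if attempt == max_attempts:
                raise  # give up and let the caller decide what to do
            # Short randomized pause so the competing transactions
            # are less likely to collide again immediately.
            time.sleep(base_delay * attempt * random.random())
```

The callable passed in is assumed to contain the whole transaction (begin, statements, commit), so that repeating it is safe.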

So if deadlocks are unavoidable, why not study their properties? This post proves a simple property of most database systems using the tools of graph theory.

Databases are accessed by multiple clients at the same time. Inside the database, a separate process exists for every client. These processes carry out their instructions. The processes access resources (tables, records, indexes, etc.), and only one process can access a resource at a time, while the others have to wait until it finishes. When a process X has to wait for another process Y, we say that X is dependent on Y. This dependency ends when Y releases the resource.

These things can be beautifully represented in terms of graph theory. Here is a comparison of the corresponding terms in the two fields:

| Database system | Graph theory |
| --- | --- |
| Process | Vertex |
| Dependency | Directed edge, arc |
| Deadlock | Directed cycle |
| Maximum number of dependencies of the processes | Maximum outdegree of the vertices |

Here is a picture of a typical deadlock:

We will prove this property:

- In a transactional database system, two or more deadlock cycles are never connected to each other. They are always separated.

This statement is based on the fact that in most transactional database systems a process can be dependent on at most one other process. A waiting process simply waits, instead of trying to carry out other tasks in the meantime.
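Because every process waits for at most one other process, the whole wait-for graph fits into a plain dictionary. The following is a minimal sketch under that assumption; the function name `find_deadlock` and the process names are made up for illustration and do not come from any particular database.

```python
def find_deadlock(waits_for, start):
    """Follow the single outgoing edge from `start`.

    Returns the list of processes on the cycle that is reached,
    or None if the walk ends at a process that is not waiting.
    """
    position = {}   # process -> index in the walk
    walk = []
    current = start
    while current is not None and current not in position:
        position[current] = len(walk)
        walk.append(current)
        current = waits_for.get(current)   # None: not waiting for anyone
    if current is None:
        return None
    return walk[position[current]:]


# A waits for B, B for C, C for A (a deadlock); D waits for A; E is running.
waits_for = {"A": "B", "B": "C", "C": "A", "D": "A", "E": None}
print(find_deadlock(waits_for, "D"))  # ['A', 'B', 'C']
print(find_deadlock(waits_for, "E"))  # None
```

Note that D is blocked by the deadlock without being part of the cycle itself.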

Now let’s translate these statements into the language of graph theory:

- In a connected directed graph (digraph) G, where connected means that the underlying undirected graph is connected, if the maximum outdegree of the vertices is 1, then G contains at most one directed cycle.
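Before proving the statement, it can be checked exhaustively on small graphs. The sketch below is not part of the proof; it enumerates every digraph with outdegree at most 1 on a few vertices and counts the directed cycles in each connected component. The names `cycles_per_component` and `claim_holds_up_to` are invented here, and self-loops are counted as cycles of length 1.

```python
from itertools import product


def cycles_per_component(succ):
    """succ[v] is the one vertex v points to, or None.

    Returns a dict: component representative -> number of directed cycles.
    """
    n = len(succ)
    parent = list(range(n))   # union-find over the undirected graph

    def find(v):
        while parent[v] != v:
            parent[v] = parent[parent[v]]
            v = parent[v]
        return v

    for v, w in enumerate(succ):
        if w is not None:
            parent[find(v)] = find(w)

    counts = {find(v): 0 for v in range(n)}
    on_counted_cycle = set()
    for start in range(n):
        # Walk along out-edges until the walk stops or repeats a vertex.
        path, v = [], start
        while v is not None and v not in path:
            path.append(v)
            v = succ[v]
        if v is not None and v not in on_counted_cycle:
            on_counted_cycle.update(path[path.index(v):])
            counts[find(v)] += 1
    return counts


def claim_holds_up_to(max_n):
    """True if no component of any such graph has more than one cycle."""
    for n in range(1, max_n + 1):
        for succ in product([None] + list(range(n)), repeat=n):
            if any(c > 1 for c in cycles_per_component(succ).values()):
                return False
    return True


print(claim_holds_up_to(5))
```

A graph such as `(1, 0, 3, 2)` does contain two cycles, but they sit in two different components, which is exactly what the statement allows.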

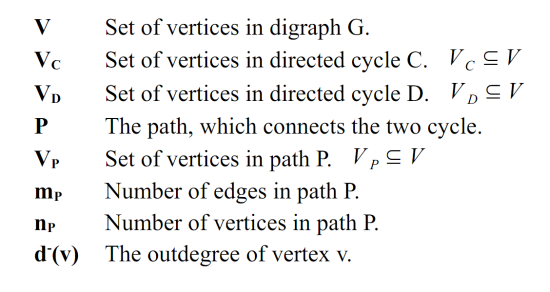

Here is a summary of the symbols used:

Proof by reductio ad absurdum

So we will prove the original statement by deriving a contradiction from its negation. Suppose G contains two distinct directed cycles, named C and D.

[1] states formally that the maximum outdegree is 1.

The vertices in the two cycles have outdegree at least 1, because every vertex has an edge to the next vertex in the cycle sequence. But because of [1], the vertices in the cycles must have outdegree exactly 1, as shown in [2] and [3]:

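The two cycles cannot share a vertex: every cycle vertex has exactly one outgoing edge, so following the edges from a shared vertex would trace out the same cycle twice. Because our graph G is connected, a simple path P must therefore exist that connects the two cycles (ignoring edge directions). We choose the shortest such path, so that only its two endpoints lie on the cycles. The path is also a sub-graph, and a well-known fact for sub-graphs is that the sum of the outdegrees of the vertices, counted inside the sub-graph, gives the number of its edges [4]:

And for simple paths we also have a specific relation between the number of vertices and the number of edges [5]: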

If we substitute the right side of [5] into the right side of [4], we get:

Which means: The sum of the outdegrees of all vertices inside a path is always equal to the number of vertices minus one.
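For readers who cannot see the formula images, the three relations can be written out as follows. The notation is an assumption made for this sketch and may differ from the images: V(P) is the vertex set of P, n_P and e_P are the numbers of its vertices and edges, and d⁺_P(v) is the outdegree of v counted inside P.

```latex
\begin{align}
  \sum_{v \in V(P)} d^{+}_{P}(v) &= e_P       \tag{4} \\
  e_P                            &= n_P - 1   \tag{5} \\
  \sum_{v \in V(P)} d^{+}_{P}(v) &= n_P - 1   \tag{6}
\end{align}
```

For example, a path on 4 vertices has 3 edges, and each edge is an outgoing edge of exactly one of its two ends, so the outdegrees inside the path add up to 3.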

For the final step we will use the pigeonhole principle.

The path P connects the two cycles, so its two endpoints lie on the cycles. Each endpoint already has outdegree 1 because of its cycle edge, and by [1] it cannot have more, so it contributes no outgoing edge to P. That leaves np-2 interior vertices, among which the np-1 outdegree units of P have to be distributed. By the pigeonhole principle, at least one vertex must have outdegree at least 2.

This contradicts [1], so the original statement is proved.
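The counting step can also be checked by brute force. The sketch below, with the invented name `consistent_orientations`, tries every way of directing the edges of a path on n vertices and keeps only those where the endpoints have no outgoing edge inside the path and no vertex has more than one. According to the argument above, nothing should survive.

```python
from itertools import product


def consistent_orientations(n):
    """Orientations of the path v0 - v1 - ... - v(n-1) that respect [1].

    An orientation is kept only if both endpoints have outdegree 0 inside
    the path (their single out-edge belongs to a cycle) and every vertex
    has outdegree at most 1.
    """
    found = []
    for orientation in product((0, 1), repeat=n - 1):
        out = [0] * n
        for i, forward in enumerate(orientation):
            # Edge i joins v_i and v_(i+1); forward means v_i -> v_(i+1).
            out[i if forward else i + 1] += 1
        if out[0] == 0 and out[-1] == 0 and max(out) <= 1:
            found.append(orientation)
    return found


for n in range(2, 12):
    assert consistent_orientations(n) == []
```

The case n = 2 covers two cycles joined by a single edge: whichever way that edge points, its tail is a cycle vertex with a second outgoing edge.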

![[7]](http://blog.bolner.hu/wp-content/uploads/2011/08/Clipboard071.png)

![[1]](http://blog.bolner.hu/wp-content/uploads/2011/08/Clipboard01.png)

![[2]](http://blog.bolner.hu/wp-content/uploads/2011/08/Clipboard02.png)

![[3]](http://blog.bolner.hu/wp-content/uploads/2011/08/Clipboard03.png)

![[4]](http://blog.bolner.hu/wp-content/uploads/2011/08/Clipboard04.png)

![[5]](http://blog.bolner.hu/wp-content/uploads/2011/08/Clipboard05.png)

![[6]](http://blog.bolner.hu/wp-content/uploads/2011/08/Clipboard06.png)